Today I wanted to share some thoughts on some great new technology that is being actively developed and uses cameras to extract sound and physical property information from everyday objects. I had heard of the visual microphone work that Abe Davis and his cohorts at MIT were working with, but they've gone a step further recently. Before we jump in, I wanted to thank Evan Bianco of Agile Geoscience for pointing me in this direction and asking about what implications this could have for rock mechanics. If you like the things I post, be sure to checkout Agile's blog!

Abe's initial work on the "visual microphone" consisted of using high speed cameras and trying to recover sound in a room by videoing an object like a plant or bag of chips and analyzing the tiny movements of the object. Remember sound is a propagating pressure wave and makes things vibrate, just not necessarily that much. I remember thinking "wow, that's really neat, but I don't have a high speed camera and can't afford one". Then Abe figured out a way to do this with normal cameras by utilizing the fact that most digital cameras have a rolling shutter.

Most video cameras around shoot video at 24 or 32 frames (still photos) each second (frames per second or fps). Modern motion pictures are produced at 24 fps. If we change the photos very slowly and we just see flashing pictures, but around the 16 fps mark our mind begins to merge the images into a movie. This is an effect of persistence of vision and it's a fascinating rabbit hole to crawl down. (For example, did you know that old time film projectors flashed the same frame more than once? The rapid flashing helped us not see flickers, but still let the film run at a slower speed. Checkout the engineer guy's video about this.) In the old days of film, the images were exposed all at once. Every point of light in the scene simultaneously flooded through the camera and exposed the film. That's not how digital cameras work at all. Most digital cameras use a rolling shutter. This means the image acquisition system scans across the CCD sensor row by row (much like your TV scans to show an image, called raster scanning) and records the light hitting that sensor. This means for things moving really fast, we get some strange recordings! The camera isn't designed to capture things that move significantly in a single frame. This is a type of non-traditional signal aliasing. Regular aliasing is why wagon wheels in westerns sometimes look like they are spinning backwards. Have a look at the short clip below that shows an aircraft propeller spinning. If that's real I want off the plane!

But how does a rolling shutter help here? Well 24 fps just isn't fast enough to recover audio. A lot of audio recordings sample the air pressure at the microphone about 44,200 times per second. Abe uses the fact that the scanning of the sensor gives him more temporal resolution than that rate at which entire frames are being captured. The details of the algorithm can get complex, but the idea is to watch an object vibrate and by measuring the vibration estimate the sound pressure and back-out the sound.

Sure we've all seen spies reflect a laser off glass in a building to listen to conversations in the room, but this is using objects in the room and simple video! Early days of the technology required ideal lighting and loud sounds. Here's Abe screaming the words to "Mary had a little lamb" at a bag of potato chips for a test.... not exactly the world of James Bond.

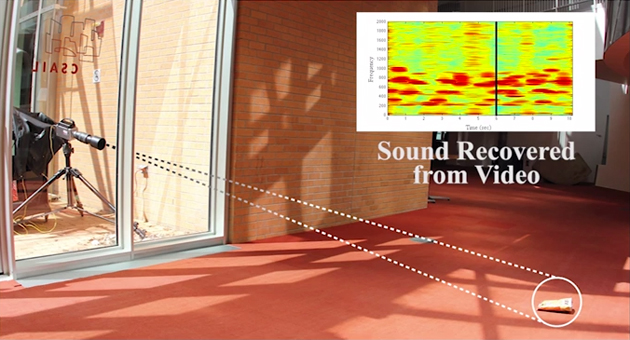

As the team improved the algorithm, they filmed the bag in a natural setting through a glass pane and recovered music. Finally they were able to recover music from a pair of earbuds laying on a table. The audio quality isn't perfect, but good enough that Shazam recognized the song!

The tricks that the group has been up to lately though are what is the most fascinating. By videoing objects that are being minority perturbed by external forces, they are able to model the object's physical properties and predict its behavior. Be sure to watch the TED talk below to see it in action. If you're short on time skip into about the 12 minute mark, but really just watch it all.

By extracting the modulus/stiffness of objects and how they respond to forces, the models they create are pretty lifelike simulations of what would happen if you exerted a force on the real thing. The movements don't have to be big. Just tapping the table with a wire figure on it or videoing trees in a gentle breeze is all it needs. This technology could let us create stunning effects in movies and maybe even be implemented in the lab.

I've got some ideas on some low hanging fruit that could be tried and some more advanced ideas as well. I'm going to talk about that next time (sorry Evan)! I want to make sure we all have time to watch the TED video and that I can expand my list a little more. I'm thinking along the lines of laboratory sensing technology and civil engineering modeling on the cheap... What ideas do you have? Expect another post in the very near future!